Azure Data Factory: Reduce Fabric Costs

Cut Microsoft Fabric costs with Azure Data Factory pipelines that suspend idle capacities through the Fabric API, with Teams alerts and managed identity

Key insights

- Microsoft Fabric capacities can keep billing while idle, so cost rises quickly.

Use Azure Data Factory (ADF) pipelines to automate pausing those capacities and reduce spend during off hours. - The key advantage is running orchestration on a mounted ADF instance so compute runs outside Fabric.

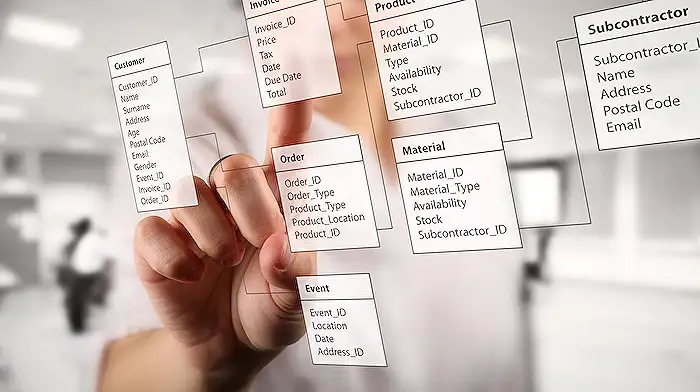

That shifts charges to consumption-based billing for ADF compute instead of continuous Fabric capacity units. - Design the pipeline to start from a simple capacity list (name, resource group, subscription ID).

Use a Lookup activity to read that list and parse the returned JSON for each capacity entry. - Use a ForEach loop to iterate capacities and perform a state check via the Fabric management API.

If the capacity is already paused, skip; if running, call the suspend flow. - Call the Fabric suspend API to pause active capacities and add a verification call to confirm the new state.

Optionally send an adaptive card to notify teams about paused capacities for audit and transparency. - Schedule with ADF triggers and grant the pipeline a managed identity plus the required RBAC permissions or a custom role to call management APIs.
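As a rough illustration of the Lookup step, the Python sketch below assumes the Lookup activity runs with "First row only" disabled. In that mode ADF returns the rows as a JSON object with `count` and `value` properties. The column names `capacityName`, `resourceGroup`, and `subscriptionId` are hypothetical placeholders for whatever your list uses, and the zeroed subscription IDs are dummies.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Capacity:
    """One row of the capacity list: name, resource group, subscription ID."""
    name: str
    resource_group: str
    subscription_id: str


def parse_lookup_output(lookup_output: dict) -> list[Capacity]:
    """Turn a Lookup output (First row only = false) into Capacity records.

    Column names are hypothetical; rename them to match your own list.
    """
    rows = lookup_output.get("value", [])
    return [
        Capacity(
            name=row["capacityName"],
            resource_group=row["resourceGroup"],
            subscription_id=row["subscriptionId"],
        )
        for row in rows
    ]


# Example Lookup output with two dummy capacities.
sample = json.loads("""
{
  "count": 2,
  "value": [
    {"capacityName": "fabricdev01", "resourceGroup": "rg-fabric-dev",
     "subscriptionId": "00000000-0000-0000-0000-000000000000"},
    {"capacityName": "fabrictest01", "resourceGroup": "rg-fabric-test",
     "subscriptionId": "00000000-0000-0000-0000-000000000000"}
  ]
}
""")
capacities = parse_lookup_output(sample)
print([c.name for c in capacities])
```

Inside the pipeline itself, the ForEach activity would typically take `@activity('LookupCapacities').output.value` as its items, where `LookupCapacities` is a hypothetical activity name.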

Note: the Fabric API may not return all the workspace metadata needed to decide whether pausing is safe, so the capacity list, verification steps, and managed identity setup carry much of the governance and safety burden.

Pragmatic Works published a concise YouTube walkthrough showing how to use Azure Data Factory pipelines to automatically turn off idle Microsoft Fabric capacities, an approach that promises tangible cost savings. In the video, Zane Goodman explains why running orchestration in ADF can avoid continuous Fabric capacity charges and how to implement a safe suspend workflow with verification. Organizations that track cloud spend closely may find the tutorial particularly useful.

Quick Summary of the Approach

The video demonstrates an ADF pipeline that loops through a list of Fabric capacity resources, checks each resource state, and calls the management API to suspend those that are running. Moreover, it shows how to verify the suspend action and optionally send alerts when a capacity is paused. In short, the pipeline automates routine shutdowns so teams do not pay for idle compute overnight or during predictable downtime.
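The savings depend on how many hours a capacity sits paused. Here is a back-of-the-envelope sketch under two assumptions: the capacity only needs to run during a fixed weekday window, and `hourly_rate` is a placeholder rather than a published Fabric price. It ignores ADF's own activity charges and any smoothed usage that Fabric bills at the moment a capacity is paused.

```python
HOURS_PER_WEEK = 7 * 24  # 168


def weekly_pause_savings(hourly_rate: float, running_hours_per_weekday: float,
                         days_running: int = 5) -> tuple[float, float, float]:
    """Return (paused hours, paused fraction, avoided cost) per week.

    Assumes the capacity runs only during a fixed window on `days_running`
    days and is suspended every other hour of the week. `hourly_rate` is a
    placeholder for your SKU's pay-as-you-go price.
    """
    if not 0 <= running_hours_per_weekday <= 24:
        raise ValueError("running_hours_per_weekday must be between 0 and 24")
    if not 0 <= days_running <= 7:
        raise ValueError("days_running must be between 0 and 7")
    running_hours = running_hours_per_weekday * days_running
    paused_hours = HOURS_PER_WEEK - running_hours
    return paused_hours, paused_hours / HOURS_PER_WEEK, paused_hours * hourly_rate


# Example: capacity runs 07:00-19:00 Monday to Friday (12 h x 5 days = 60 h).
paused, fraction, avoided = weekly_pause_savings(hourly_rate=1.0,
                                                 running_hours_per_weekday=12)
print(paused, round(fraction, 3), avoided)  # 108 0.643 108.0
```

With a business-hours schedule, roughly 64% of the week's capacity hours are avoided. Multiply by your real hourly rate to get a currency figure.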

Pragmatic Works also contrasts this solution with running native Fabric pipelines, arguing that mounted ADF executes on Azure-hosted integration runtimes rather than consuming Fabric capacity. Therefore, this hybrid model separates orchestration compute from Fabric CUs and can reduce charges for data-heavy ETL workloads. At the same time, the demo emphasizes governance by keeping monitoring and logs visible in the Fabric workspace.

Why Use ADF Instead of Fabric Pipelines

First, ADF runs on pay-per-use integration runtimes, so it does not consume provisioned Fabric capacity while executing pipelines. As a result, teams can run heavy workloads without driving up their Fabric CU consumption, which is helpful for bursty or off-hours jobs. Furthermore, ADF supports a broad range of runtimes and connectors that some organizations depend on for legacy or on-prem integrations.

However, this choice adds operational complexity because orchestration spans two control planes: Fabric and Azure Data Factory. Consequently, teams must manage mounted ADF instances and ensure the pipeline has permission to call Fabric management APIs. Still, the tradeoff often favors cost savings for tenants with predictable idle windows or expensive steady-state capacities.

How the Pipeline Works in Practice

The core pipeline starts with a Lookup activity that reads a configuration list containing each capacity's name, resource group, and subscription ID, then runs a ForEach loop to process the entries. Inside the loop, a GET request reads the capacity's current state, and an If condition decides whether a suspend call is needed. When a capacity is active, the pipeline invokes the Fabric suspend API and then makes a verification call to confirm the state transition.
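The loop can be modeled outside ADF to make the control flow explicit. This Python sketch assumes capacities are addressed through Azure Resource Manager under `Microsoft.Fabric/capacities`, that `api-version=2023-11-01` is acceptable (confirm the current version), and that a running capacity reports `properties.state == "Active"`. The HTTP calls are injected functions standing in for the pipeline's Web activities. The verification step is left out to keep the example short.

```python
from typing import Callable

ARM_ENDPOINT = "https://management.azure.com"
API_VERSION = "2023-11-01"  # assumed Microsoft.Fabric api-version; confirm before use


def capacity_url(subscription_id: str, resource_group: str, name: str) -> str:
    """ARM resource path of a Fabric capacity (Microsoft.Fabric/capacities)."""
    return (f"{ARM_ENDPOINT}/subscriptions/{subscription_id}"
            f"/resourceGroups/{resource_group}"
            f"/providers/Microsoft.Fabric/capacities/{name}")


def process_capacities(capacities: list[dict],
                       get_json: Callable[[str], dict],
                       post: Callable[[str], None]) -> list[tuple[str, str]]:
    """ForEach capacity: GET its state, and POST suspend only if it is Active.

    get_json and post stand in for Web activities authenticated with the
    managed identity; injecting them keeps this sketch testable offline.
    """
    results = []
    for cap in capacities:
        base = capacity_url(cap["subscriptionId"], cap["resourceGroup"],
                            cap["capacityName"])
        state = get_json(f"{base}?api-version={API_VERSION}")["properties"]["state"]
        if state == "Active":  # If condition: only running capacities are suspended
            post(f"{base}/suspend?api-version={API_VERSION}")
            results.append((cap["capacityName"], "suspend requested"))
        else:  # already paused or in another state: leave it alone
            results.append((cap["capacityName"], f"skipped ({state})"))
    return results


# Offline usage with fake Web activities (hypothetical capacity names).
fake_states = {"fabricdev01": "Active", "fabrictest01": "Paused"}
posted: list[str] = []


def fake_get(url: str) -> dict:
    name = url.split("/capacities/")[1].split("?")[0]
    return {"properties": {"state": fake_states[name]}}


caps = [
    {"capacityName": n, "resourceGroup": "rg-fabric",
     "subscriptionId": "00000000-0000-0000-0000-000000000000"}
    for n in fake_states
]
results = process_capacities(caps, fake_get, posted.append)
print(results)
```

In the pipeline, the equivalent If condition might read `@equals(activity('GetCapacityState').output.properties.state, 'Active')`, where `GetCapacityState` is a hypothetical Web activity name.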

Additionally, the video walks through creating an adaptive card payload for Teams notifications so operators get immediate visibility when capacities change state. This notification step helps prevent surprises and supports lightweight governance by telling owners when a capacity goes offline. Scheduling is handled with ADF triggers so the pipeline can run at predictable times such as evenings or weekends.
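An adaptive card for Teams is plain JSON, so a Web activity can POST it to a channel's incoming webhook URL. This Python sketch builds one such payload, assuming the incoming-webhook message format (a `message` with an `application/vnd.microsoft.card.adaptive` attachment). The card layout, the function name, and the `run_id` value are illustrative choices, not the layout shown in the video.

```python
import json
from datetime import datetime, timezone


def build_teams_card(paused: list[str], run_id: str, when: datetime) -> dict:
    """Build a Teams incoming-webhook message carrying an adaptive card.

    Lists each paused capacity in a FactSet; run_id would typically come
    from the pipeline run ID.
    """
    facts = [{"title": name, "value": "Paused"} for name in paused]
    return {
        "type": "message",
        "attachments": [{
            "contentType": "application/vnd.microsoft.card.adaptive",
            "contentUrl": None,
            "content": {
                "$schema": "http://adaptivecards.io/schemas/adaptive-card.json",
                "type": "AdaptiveCard",
                "version": "1.2",
                "body": [
                    {"type": "TextBlock", "size": "Medium", "weight": "Bolder",
                     "text": f"Paused Fabric capacities: {len(paused)}"},
                    {"type": "TextBlock", "wrap": True,
                     "text": f"Pipeline run {run_id} at {when.isoformat()}"},
                    {"type": "FactSet", "facts": facts},
                ],
            },
        }],
    }


card = build_teams_card(["fabricdev01"], "run-123",
                        datetime(2024, 1, 1, 22, 0, tzinfo=timezone.utc))
payload = json.dumps(card)  # body for the Web activity's POST to the webhook
print(payload)
```

In ADF the run ID is available as `@pipeline().RunId`, and the list of paused names could be collected with an Append Variable activity inside the ForEach.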

Security, Permissions, and Governance

To call the Fabric management endpoints, the pipeline runs under a managed identity that needs specific permissions. The demo covers assigning a custom RBAC role with only the permissions required to read and suspend capacities, which reduces the blast radius and follows the principle of least privilege. The video also recommends verifying the permission scope across subscriptions and resource groups so the pipeline does not fail at run time.
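A least-privilege custom role can be written as an Azure role definition. This Python sketch generates one, assuming the Microsoft.Fabric provider exposes the operations `Microsoft.Fabric/capacities/read`, `.../suspend/action`, and `.../resume/action`. Verify these against the provider's operations list before use. The role name and description are hypothetical.

```python
import json


def fabric_suspend_role(subscription_ids: list[str], allow_resume: bool = False) -> dict:
    """Custom role definition that can read and suspend Fabric capacities only.

    Action names are assumed from the Microsoft.Fabric resource provider;
    allow_resume adds the resume action for a future auto-start pipeline.
    """
    actions = [
        "Microsoft.Fabric/capacities/read",
        "Microsoft.Fabric/capacities/suspend/action",
    ]
    if allow_resume:
        actions.append("Microsoft.Fabric/capacities/resume/action")
    return {
        "Name": "Fabric Capacity Suspend Operator",  # hypothetical role name
        "IsCustom": True,
        "Description": "Read Fabric capacities and suspend them from an ADF pipeline.",
        "Actions": actions,
        "NotActions": [],
        "DataActions": [],
        "NotDataActions": [],
        "AssignableScopes": [f"/subscriptions/{sid}" for sid in subscription_ids],
    }


role = fabric_suspend_role(["00000000-0000-0000-0000-000000000000"])
print(json.dumps(role, indent=2))
```

Saved to a file, a definition like this can be created with `az role definition create --role-definition @role.json`. It is then assigned to the data factory's managed identity with `az role assignment create` at the narrowest scope that covers the listed capacities.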

Another governance consideration is logging and verification: the pipeline includes verification calls and logging outputs so operators can audit each suspend action. This matters because the Fabric API alone may not return all the metadata needed to decide safely, so the pipeline supplements API responses with its own checks, such as the curated capacity list and the post-suspend state check. Teams should therefore test the workflow thoroughly in non-production environments before applying it to critical capacities.
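Because a suspend request may take a while to complete, a verification step usually polls until the capacity reports its target state. This Python sketch shows that polling pattern and returns the observed states so they can be logged. It assumes the paused state is reported as `"Paused"`, and `"Pausing"` in the example is only an illustrative intermediate value. In ADF, the same shape maps naturally onto an Until activity wrapping a Wait and a Web activity.

```python
import time
from typing import Callable


def wait_for_state(get_state: Callable[[], str], target: str = "Paused",
                   attempts: int = 10, delay_seconds: float = 30.0,
                   sleep: Callable[[float], None] = time.sleep) -> tuple[bool, list[str]]:
    """Poll get_state() until it returns `target` or attempts run out.

    Returns (reached, observed_states) so every observation can be logged
    for auditing, whether or not the capacity reached the target state.
    """
    observed: list[str] = []
    for i in range(attempts):
        state = get_state()
        observed.append(state)
        if state == target:
            return True, observed
        if i < attempts - 1:  # no need to wait after the final attempt
            sleep(delay_seconds)
    return False, observed


# Example with a fake state source and no real waiting.
fake_states = iter(["Pausing", "Pausing", "Paused"])
reached, seen = wait_for_state(lambda: next(fake_states), sleep=lambda s: None)
print(reached, seen)
```

A `False` result is the signal to raise an alert rather than assume the suspend succeeded.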

Tradeoffs and Operational Challenges

The financial benefit can be significant, but there are tradeoffs to balance: with frequent on/off cycles, workloads may have to wait for a capacity to resume, which can disrupt time-sensitive jobs. Likewise, if a capacity must be available quickly, automated shutdowns introduce risk unless they are paired with robust alerting and a fast resume procedure. Teams should therefore model cost against availability and set guardrails around which capacities are eligible for automated suspension.
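One simple guardrail is to make automated suspension opt-in per capacity in the configuration list. This Python sketch assumes two hypothetical columns, `autoPause` and `environment`. A capacity is eligible only when its row explicitly sets `autoPause` to true and is not marked as production. A missing flag means the capacity is left running.

```python
def eligible_for_auto_pause(cap: dict) -> bool:
    """Hypothetical guardrail: opt-in only, and never production.

    Eligible only if autoPause is exactly True and environment is not 'prod'
    (case-insensitive). A missing autoPause flag counts as not eligible.
    """
    opted_in = cap.get("autoPause") is True
    is_prod = str(cap.get("environment", "")).strip().lower() == "prod"
    return opted_in and not is_prod


rows = [
    {"capacityName": "fabricdev01", "autoPause": True, "environment": "dev"},
    {"capacityName": "fabricprod01", "autoPause": True, "environment": "Prod"},
    {"capacityName": "fabrictest01", "environment": "test"},
]
to_pause = [r["capacityName"] for r in rows if eligible_for_auto_pause(r)]
print(to_pause)  # ['fabricdev01']
```

Failing closed on a missing flag means a new capacity is never paused by accident just because someone added it to the list.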

Other challenges include API rate limits, error handling, and ensuring the pipeline behaves well during partial failures or transient network issues. Therefore, the video recommends adding retry logic, verification steps, and an alerting channel to address exceptions. Ultimately, the approach offers a practical and low-friction way to reduce Fabric spend, while demanding careful planning for permissions, testing, and operational readiness.
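For rate limits and transient failures, a common pattern is to retry only on throttling and server errors. It honors a numeric `Retry-After` header when present and otherwise backs off exponentially with jitter. This Python sketch is one such policy, not the retry logic from the video. The set of retryable status codes and the delay parameters are assumptions to tune. The simplest in-ADF equivalent is the retry count and retry interval settings on each Web activity.

```python
import random
import time
from typing import Callable

RETRYABLE_STATUS = {429, 500, 502, 503, 504}  # assumed retryable responses


def call_with_retry(send: Callable[[], tuple[int, dict]],
                    max_attempts: int = 5,
                    base_delay: float = 2.0,
                    max_delay: float = 60.0,
                    sleep: Callable[[float], None] = time.sleep) -> tuple[int, dict]:
    """Call send() until it returns a non-retryable status or attempts run out.

    send() returns (status_code, headers). A numeric Retry-After header is
    honored; otherwise the delay is exponential backoff with full jitter.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        status, headers = send()
        if status not in RETRYABLE_STATUS or attempt >= max_attempts:
            return status, headers
        retry_after = str(headers.get("Retry-After", ""))
        if retry_after.isdigit():
            delay = float(retry_after)
        else:
            delay = random.uniform(0, min(max_delay, base_delay * 2 ** (attempt - 1)))
        sleep(delay)
        attempt += 1


# Example: throttled once, then a transient 503, then accepted.
responses = iter([(429, {"Retry-After": "1"}), (503, {}), (202, {})])
sleeps: list[float] = []
result = call_with_retry(lambda: next(responses), sleep=sleeps.append)
print(result, len(sleeps))
```

Returning the final status instead of raising lets the caller decide whether to alert, which fits the alerting channel the video recommends for exceptions.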

In closing, Pragmatic Works’ walkthrough provides a clear, implementable pattern that administrators can adapt for their environments, and it highlights both the cost advantages and the governance work required. As more teams seek to optimize cloud costs, this hybrid pattern between ADF and Microsoft Fabric represents a pragmatic compromise that balances savings with control. Viewers interested in follow-up details about the Teams alert workflow or an “auto turn-on capacities” variant may request deeper dives or example templates from the author.
