
Fabric Ontologies: AI-First Platforms?

Data Strategist & YouTuber

A Microsoft Fabric expert's guide to ontologies, Lakehouse sync, AI workflows, the REST API, and GitHub for Fabric data engineers

Key insights

Fabric Ontologies in Fabric IQ define business entities and relationships so AI agents understand context across data sources.

The video explains how ontologies turn raw tables and signals into shared, human-readable concepts that reduce confusion for agents.

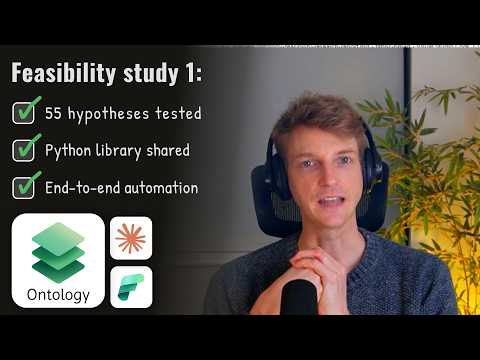

The research report covers a practical toolset: a Python library for programmatic ontology work and an Ontology REST API for discovery and management.

The presenter walks through design goals, key sections of the feasibility study, and implementation patterns used in the experiments.

The live demo shows core functionality: Entity & Relationship CRUD, Data Bindings, and Lakehouse Sync to keep graphs and storage aligned.

You see entity creation, graph updates, GQL query execution, and how changes can propagate to a lakehouse in near real time.

Using ontologies improves outcomes: it helps reduce AI hallucinations, enables autonomous agents, and embeds governance and traceability into agentic workflows.

The video highlights how semantic context and rules let agents act safely and explain decisions across teams.

The stack integrates with platform features like the Model Context Protocol (MCP) and OneLake for secure, contextual execution and unified storage.

Integration points also include real-time signals and graph modeling to connect operational data, analytics, and digital twin scenarios.

Important caveats: the project is labeled experimental and not production-ready, with CI/CD, environment switching, and graph-refresh strategies still under evaluation.

The presenter recommends treating the work as a prototype and using it for ideas rather than deploying directly to critical workloads.
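The first key insight describes ontologies as definitions of business entities and the relationships between them. A minimal Python sketch of that idea follows; `EntityType`, `RelationshipType`, and `Ontology` are hypothetical classes, not the object model of Fabric IQ or of the presenter's library. It only shows how a shared schema can refuse links to undefined concepts and render plain-language statements an agent could receive as context.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EntityType:
    """A business concept such as 'Customer', with its named properties."""
    name: str
    properties: tuple[str, ...]


@dataclass(frozen=True)
class RelationshipType:
    """A directed, named link from one entity type to another."""
    name: str
    source: str
    target: str


@dataclass
class Ontology:
    """Hypothetical in-memory ontology; not the Fabric IQ object model."""
    entity_types: dict[str, EntityType] = field(default_factory=dict)
    relationship_types: dict[str, RelationshipType] = field(default_factory=dict)

    def add_entity_type(self, entity: EntityType) -> None:
        self.entity_types[entity.name] = entity

    def add_relationship_type(self, rel: RelationshipType) -> None:
        # Refuse links to concepts the ontology does not define.
        for end in (rel.source, rel.target):
            if end not in self.entity_types:
                raise ValueError(f"unknown entity type: {end}")
        self.relationship_types[rel.name] = rel

    def describe(self) -> list[str]:
        """Plain-language statements that could be handed to an agent as context."""
        return [f"{r.source} {r.name} {r.target}" for r in self.relationship_types.values()]


onto = Ontology()
onto.add_entity_type(EntityType("Customer", ("customer_id", "name")))
onto.add_entity_type(EntityType("Product", ("sku", "category")))
onto.add_relationship_type(RelationshipType("purchased", "Customer", "Product"))
print(onto.describe())  # ['Customer purchased Product']
```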

In a new YouTube presentation, Will Needham (Learn Microsoft Fabric with Will) explores how ontologies and graph models can power AI-first platforms inside Microsoft Fabric. The video documents a feasibility research project that tests ontologies, graphs, and APIs against real Fabric workloads. Importantly, the presenter repeatedly warns that this work is experimental and not ready for production use, so readers should treat the demonstrations as proof-of-concept ideas rather than deployment blueprints.

Video Overview and Structure

The video opens by explaining why teams would use Fabric Ontologies and graph models to ground AI agents. It then walks through a research report section by section to lay out the project’s goals. Timestamps mark each segment, covering topics from ontology discovery to graph refresh techniques, so viewers can jump straight to the parts that matter to them.

Tools, Code, and Live Demonstration

Needham showcases a small Python library built to manage ontologies and graphs programmatically, and he demos CRUD operations via a REST API. He also demonstrates data bindings and a Lakehouse sync function that attempts to align the semantic layer with underlying storage. During the live demo, the video shows how queries and updates run through a sample API, which gives a practical sense of how components interact. However, the presenter emphasizes that the GitHub repository contains research artifacts, not production-grade software.
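The CRUD, data-binding, and Lakehouse sync flow described here can be approximated in plain Python. This sketch is not the presenter's library or the Ontology REST API: `EntityGraph`, `DataBinding`, and `sync_table` are hypothetical names, and the `rows` argument stands in for rows read from a Lakehouse table. It assumes a full-refresh strategy, upserting every bound row and deleting entities whose keys no longer appear in the source.

```python
from dataclasses import dataclass
from typing import Optional

Node = tuple[str, str]  # (entity type, key)


@dataclass
class DataBinding:
    """Hypothetical binding from one source table to one entity type."""
    entity_type: str
    key_column: str
    column_map: dict[str, str]  # entity property -> source column


class EntityGraph:
    """In-memory stand-in for an ontology graph with entity and relationship CRUD."""

    def __init__(self) -> None:
        self.entities: dict[Node, dict] = {}
        self.edges: set[tuple[str, Node, Node]] = set()  # (relationship, source, target)

    def upsert_entity(self, entity_type: str, key: str, props: dict) -> None:
        """Create the entity, or replace its properties if it already exists."""
        self.entities[(entity_type, key)] = dict(props)

    def get_entity(self, entity_type: str, key: str) -> Optional[dict]:
        return self.entities.get((entity_type, key))

    def delete_entity(self, entity_type: str, key: str) -> None:
        """Remove the entity and every relationship that touches it."""
        node = (entity_type, key)
        self.entities.pop(node, None)
        self.edges = {e for e in self.edges if node not in (e[1], e[2])}

    def relate(self, relationship: str, source: Node, target: Node) -> None:
        if source not in self.entities or target not in self.entities:
            raise KeyError("both endpoints must exist before they can be related")
        self.edges.add((relationship, source, target))


def sync_table(graph: EntityGraph, binding: DataBinding, rows: list[dict]) -> tuple[int, int]:
    """Full refresh of one bound table; returns (entities upserted, entities removed)."""
    seen: set[str] = set()
    for row in rows:
        key = str(row[binding.key_column])
        props = {prop: row[col] for prop, col in binding.column_map.items()}
        graph.upsert_entity(binding.entity_type, key, props)
        seen.add(key)
    # Entities of this type whose keys vanished from the table are treated as deleted.
    stale = [k for (t, k) in graph.entities if t == binding.entity_type and k not in seen]
    for key in stale:
        graph.delete_entity(binding.entity_type, key)
    return len(seen), len(stale)
```

A full refresh is the simplest way to keep graph and storage aligned; an incremental approach would do less work per run but would need a reliable change feed from the source table.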

The video also discusses operational pieces such as CI/CD and environment switching, which are essential for moving any prototype toward scale. The segments on executing GQL queries and automating graph refreshes are particularly thorough, and they show how one might integrate programmatic queries into automated pipelines. Furthermore, Needham explores model context patterns that link agent tooling to the Fabric environment. These parts highlight the operational mechanics developers must address beyond the initial design.
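Environment switching is one of the open operational questions. One common pattern, assumed here rather than taken from the video, is to keep per-environment targets in configuration and resolve them at run time, so the same pipeline code points at dev, test, or prod. The `FabricTarget` fields, the placeholder IDs, and the `FABRIC_ENV` variable are all hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FabricTarget:
    """Where one environment's ontology and lakehouse live (hypothetical fields)."""
    workspace_id: str
    lakehouse_name: str


# Placeholder values; real IDs would come from your deployment configuration.
TARGETS = {
    "dev": FabricTarget("00000000-0000-0000-0000-000000000001", "lh_dev"),
    "test": FabricTarget("00000000-0000-0000-0000-000000000002", "lh_test"),
    "prod": FabricTarget("00000000-0000-0000-0000-000000000003", "lh_prod"),
}


def resolve_target(env: Optional[str] = None) -> FabricTarget:
    """Pick the target from an explicit name, else the FABRIC_ENV variable, else 'dev'."""
    name = (env or os.environ.get("FABRIC_ENV", "dev")).lower()
    if name not in TARGETS:
        raise ValueError(f"unknown environment {name!r}; expected one of {sorted(TARGETS)}")
    return TARGETS[name]
```

In a CI/CD pipeline, each stage would set `FABRIC_ENV` differently while running identical code, which keeps promotion between environments a configuration change rather than a code change.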

Benefits and Tradeoffs

Ontologies can reduce hallucinations by grounding large language models in a shared business vocabulary, so agents make decisions using context rather than guesswork. In addition, the use of graphs makes it easier to model relationships between customers, products, and events, which supports more accurate cross-domain reasoning. On the other hand, creating and maintaining an ontology introduces governance and maintenance costs that teams must accept. Therefore, organizations need to balance the value of better agent reasoning against the ongoing effort to curate and sync semantic models.
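Cross-domain reasoning over a graph often comes down to multi-hop traversal, for example from a customer to their orders, to the products in those orders, to events affecting those products. The breadth-first search below is a generic sketch over hypothetical `(source, relationship, target)` edges; it is not GQL and not how Fabric executes graph queries.

```python
from collections import deque


def within_hops(edges: list[tuple[str, str, str]], start: str, max_hops: int) -> dict[str, int]:
    """Breadth-first search over (source, relationship, target) edges.

    Returns every node reachable from `start` in at most `max_hops` steps,
    mapped to its shortest hop distance.
    """
    adjacency: dict[str, list[str]] = {}
    for source, _relationship, target in edges:
        adjacency.setdefault(source, []).append(target)

    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in adjacency.get(node, []):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    del distance[start]
    return distance


edges = [
    ("customer:42", "placed", "order:7"),
    ("order:7", "contains", "product:X"),
    ("product:X", "affected_by", "event:recall-3"),
]
print(within_hops(edges, "customer:42", 3))
# {'order:7': 1, 'product:X': 2, 'event:recall-3': 3}
```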

Real-time synchronization between ontologies and a Lakehouse can enable timely agent actions, but it also introduces consistency challenges and increased system complexity. For example, pushing updates continuously may improve freshness but can complicate transactional guarantees and debugging. Conversely, batched refreshes simplify troubleshooting at the cost of latency in agent decisions. Thus, teams should consider whether their use cases demand immediacy or steady, reproducible state.
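The freshness-versus-reproducibility tradeoff can be expressed as a single batching knob. In this hypothetical `ChangeBuffer`, `max_batch=1` behaves like continuous push, while larger values group changes into fewer writes; repeated updates to the same key collapse so only the latest value is written. `apply_batch` is a placeholder for whatever actually writes to the graph or the Lakehouse.

```python
from typing import Any, Callable


class ChangeBuffer:
    """Collects entity updates and applies them in batches.

    max_batch=1 approximates continuous push; larger values trade freshness
    for fewer, more reproducible writes.
    """

    def __init__(self, apply_batch: Callable[[dict], None], max_batch: int = 100) -> None:
        if max_batch < 1:
            raise ValueError("max_batch must be at least 1")
        self.apply_batch = apply_batch
        self.max_batch = max_batch
        self.pending: dict[str, Any] = {}

    def record(self, key: str, value: Any) -> None:
        # A later change to the same key replaces the earlier one (last write wins).
        self.pending[key] = value
        if len(self.pending) >= self.max_batch:
            self.flush()

    def flush(self) -> int:
        """Apply everything pending; return the number of keys written."""
        if not self.pending:
            return 0
        batch = self.pending
        self.apply_batch(batch)  # if this raises, the changes stay pending for a retry
        self.pending = {}
        return len(batch)
```

A time-based trigger, such as a scheduler calling `flush()` every few minutes, would bound latency when traffic is light; that part is left out to keep the sketch small.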

Implementation Challenges and Risks

Needham calls out several challenges that developers will face, including schema discovery, environment switching, and secure execution of agent code via the Model Context Protocol. He also notes that automated graph updates require careful design to avoid data drift and unintended consequences in downstream agents. Additionally, integrating with features such as OneLake and real-time pipelines raises operational overhead and potential vendor-specific coupling. These risks imply that early adopters must plan for robust testing and governance before wide deployment.
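One way to catch data drift before an automated graph update runs, assumed here rather than described in the video, is to compare a binding's mapped columns with the source table's current columns and block the refresh when a bound column has disappeared. The function names and the dictionary-based binding format are hypothetical.

```python
def detect_binding_drift(column_map: dict[str, str], table_columns: list[str]) -> dict:
    """Compare a binding (entity property -> column) with the table's current columns."""
    available = set(table_columns)
    # Bound columns that vanished or were renamed: these would silently break sync.
    missing = {prop: col for prop, col in column_map.items() if col not in available}
    # Columns nobody has mapped yet: not an error, but worth reviewing.
    unbound = sorted(available - set(column_map.values()))
    return {"missing": missing, "unbound": unbound}


def should_block_refresh(report: dict) -> bool:
    """Gate an automated graph refresh when any bound column is missing."""
    return bool(report["missing"])
```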

The video further explains that the repository is a research starting point rather than a finished solution, and it explicitly warns against using the code in production. This caution highlights a broader tradeoff: experimental tooling accelerates learning but may not meet enterprise reliability requirements. Therefore, pilot projects should focus on validation and bounded scope, while production rollouts should build in rollback and monitoring strategies. Doing so helps close the common gap between prototype speed and enterprise resilience.
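Building in rollback can start with something as simple as snapshotting state before an update and restoring it if the update raises or fails a validation check. This is a generic, in-memory sketch; `apply_with_rollback` is a hypothetical helper, and a real graph or Lakehouse would need its own versioning or restore mechanism.

```python
import copy
from typing import Callable


def apply_with_rollback(
    state: dict,
    update: Callable[[dict], None],
    validate: Callable[[dict], bool],
) -> bool:
    """Apply an in-place update; restore the prior snapshot if it fails or validation rejects it."""
    snapshot = copy.deepcopy(state)
    try:
        update(state)
        if not validate(state):
            raise ValueError("post-update validation failed")
    except Exception:
        # Restore the pre-update contents in place so existing references stay valid.
        state.clear()
        state.update(snapshot)
        return False  # a real pipeline would also log the error and alert here
    return True
```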

Practical Takeaways for Teams

For practitioners interested in adopting these ideas, the essential next steps are clear: prototype with limited datasets, measure the impact on agent accuracy, and invest early in governance. Equally important is to weigh the cost of ontology maintenance against the expected gains in agent trust and autonomy. Needham’s work serves as a useful roadmap for experimentation, and it surfaces the key integration points and failure modes teams should test. In short, the video invites careful experimentation rather than immediate adoption.
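Measuring the impact on agent accuracy needs a labeled question set and two runs, one without ontology grounding and one with it. The helpers below are hypothetical and use exact string matching, which is a simplification; free-text answers usually need a more forgiving comparison.

```python
def accuracy(predictions: list[str], expected: list[str]) -> float:
    """Share of answers that exactly match the labeled expectation."""
    if not expected or len(predictions) != len(expected):
        raise ValueError("need one prediction per labeled example")
    return sum(p == e for p, e in zip(predictions, expected)) / len(expected)


def compare_runs(baseline: list[str], grounded: list[str], expected: list[str]) -> dict:
    """Accuracy without and with ontology grounding on the same labeled questions."""
    base = accuracy(baseline, expected)
    ground = accuracy(grounded, expected)
    return {"baseline": base, "grounded": ground, "delta": ground - base}
```

Keeping the question set fixed across runs is what makes the delta meaningful; changing questions and grounding at the same time would confound the comparison.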

In conclusion, the YouTube presentation by Will Needham (Learn Microsoft Fabric with Will) offers a practical look at how ontologies and graphs could enable AI-first workflows in Microsoft Fabric. It is informative for engineers and architects who want to explore agentic systems, and it is candid about the experimental nature of the code and techniques. Organizations should balance innovation with caution and treat the project as a research foundation for future production work. The video's concrete ideas and timestamps also make it easier for teams to find the parts most relevant to their needs.
